Hi, I'm Terri, and I'm here to talk about whether we're excluding some very important people when it comes to web security.

The first thing you need to know is that...

And of course you knew that, because we all know the Internet is actually run by cats.

... and we all know that cats aren't programmers; they're artists.

Seriously, though, many of the people who do web design professionally are artists, not programmers. You can see this through the job titles used and other services offered by many web design firms.

And then there are all the people who make pages but aren't professionals. Cat blogs, community sports team sites, small church websites, etc. are often made by non-professionals.

And in many ways, it's fantastic that all these people can make web pages. Web 2.0! Sharing! Communication! But the problem is that web security is designed for programmers.

So, for the purposes of visualization, let's pretend that a web page is like a car...

Thus we can imagine that web security issues like cross-site scripting and cross-site request forgery are sort of like getting gremlins in your engine.

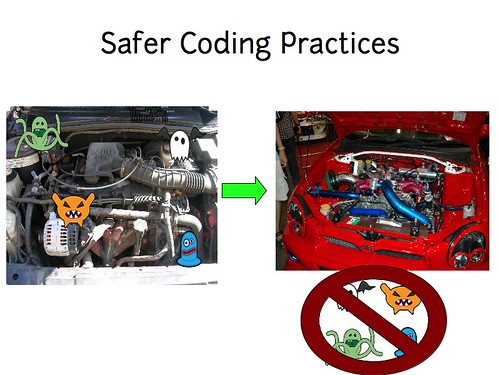

With this analogy in mind, let's look at some of the best tools we have for fixing websites:

The big one is safer programming practices. You take your existing website, and replace it with a new, gremlin-proof one. This is pretty programming-intensive, much like you'd need some serious mechanic skills to replace your entire car engine.

Then there's tainting or data flow analysis, which allows you to trace the path of the gremlins through your engine...

But once you've done that, you still have to patch the code so that the gremlins can't cause problems. Programming!

We've got known exploit detection, such as web application vulnerability scanners and web application firewalls. They tell you exactly where and what kind of gremlins you have.

But while they might protect you for a time, best practice still says you should fix your code.

And then there are the cool mashup protections, which help you fix your code to provide isolation between components so that the gremlins can't breed in your engine. But they mostly involve a lot of coding to implement.

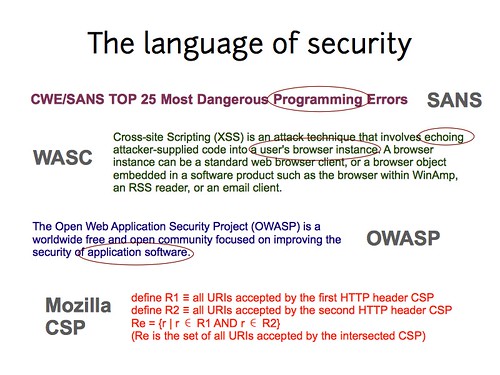

Even the language of security is heavily oriented towards programmers. The documentation for Mozilla CSP even includes set theory notation! Not exactly friendly for artists.

Some of the organizations that do the best job of communicating (web) security flaws tend to be intimidating to non-programmers, and really send the message “If you're not a programmer, this isn't for you.” This is not the message we want to send!

Because non-programmers really do need security.

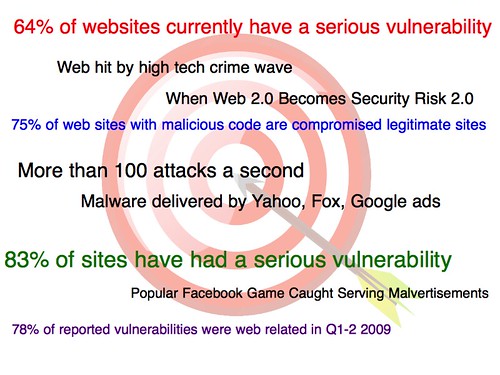

The web is a big target, and attackers aren't limiting themselves to big sites – automated attacks make it worthwhile to compromise even smaller targets. Lots of attackers are interested in sending spam, manipulating SEO, evading blacklists, etc., all of which can make use of smaller sites. And the attacks aren't always where you'd expect: Did you know your Facebook account is currently worth more on the black market than your credit card?

But if you're thinking “So, we just let the designers design and handle security at the programming layer below,” you're missing two important points:

First, smaller sites may not have anyone who can handle security, period.

And second, the design of a page actually affects the security of a page. For example, if you put an advertisement on a page with a form, you've just given that advertiser or advertising server access to your user's data. Programming under the hood can't fix that; it's done on the client side. A lot of “small” sites will use a variety of cut-and-paste code that they found elsewhere, increasing their risks even though they may not realize it.

So... that's not terribly good. What can we do about it?

Before we propose any solutions, we need to keep in mind that the cost/benefit ratio for smaller sites may be very different from what we expect. Users will reject security advice if following it costs them more than the risks it protects against. And for non-technical site creators, the cost of learning security may be months of additional time, personnel, and money spent on training. Meanwhile, how much risk is there of your community sports team website getting compromised? It may not be clear, and it may not be easy to translate into dollars.

So the first thing we can do is provide a more secure environment. The same origin policy already provides some basic protection to websites, and it's something designers just accept as part of the web infrastructure.
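For the programmers in the audience, here's roughly what the same origin policy compares. This is only a minimal sketch in Python, not how any browser actually implements it: the function names `origin` and `same_origin` and the example URLs are made up for illustration. It assumes an origin is the scheme, host, and port of a URL, with the usual default ports filled in.

```python
from urllib.parse import urlsplit

# Default ports assumed for this sketch; real browsers know about more schemes.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url):
    """Return the (scheme, host, port) triple that identifies a URL's origin."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    # Fill in the default port when the URL does not name one explicitly.
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return (scheme, host, port)


def same_origin(url_a, url_b):
    """Two URLs share an origin only if scheme, host, and port all match."""
    return origin(url_a) == origin(url_b)


if __name__ == "__main__":
    # Same site, different paths: same origin.
    print(same_origin("https://example.org/blog", "https://example.org:443/admin"))
    # A third-party ad server: different origin.
    print(same_origin("https://example.org/", "https://ads.example.net/banner.js"))
```

The nice part, from a designer's point of view, is that nobody has to write anything like this: the browser does the comparison for you.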

When I put together these slides, I didn't have any other ideas of what to do, but I've now seen a presentation that suggested some security restrictions that would have minimal impact on the top 100,000 websites but could improve security. (The paper is titled “On the Incoherencies in Web Browser Access Control Policies”.)

It'd be really handy if graphical tools like Dreamweaver could generate secure mashups. I even talked to some students from the University of Virginia who are working on small policy additions to Ruby on Rails that could provide security – we need more work like this!

Education is also a big deal: people won't bother with better security if they don't understand the risks, and they won't fix problems correctly if they don't understand the solutions. But we have to be really careful to provide materials that make sense to the target audience of designers, and that are sufficiently short that they don't cause the costs of learning to exceed the risks.

You know how the EFF has done a great job distilling the complex privacy issues in Facebook and explaining them to the general public? We need materials like that for web security as well as privacy.

Another way we can help is by providing something akin to website first aid. If you fall and skin your knee, you know enough to wash out the wound, maybe put a bandage on it. You don't need to be a doctor to help your daughter if she trips in the playground. But right now you need to be a website surgeon to handle any security!

There are already some neat things out there: The Origin: header provides protection against XSRF with minimal effort. I worked on a system called SOMA which provided additional controls over includes in websites. But the risk is in letting these minimal interventions get too huge to be useful for average websites. I'm not a huge fan of Mozilla CSP because it's getting just too big for a quick fix. We need to put a lot of thought into optimizing policies and other solutions for common cases, and less into flexibility for unlikely edge cases.
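To give a feel for how small an Origin: header check can be, here's a minimal sketch in Python. It isn't tied to any particular web framework, and the names `is_request_allowed`, `SAFE_METHODS`, and the example origins are all hypothetical. It assumes that read-only requests are let through and that state-changing requests must carry an Origin: value from a list the site trusts. Rejecting requests with no Origin: header at all is a policy choice made here for simplicity, and a real site might need to handle that case differently.

```python
# Methods treated as read-only in this sketch; anything else may change state.
SAFE_METHODS = {"GET", "HEAD", "OPTIONS"}


def is_request_allowed(method, headers, trusted_origins):
    """Decide whether a request passes a simple Origin-based XSRF check."""
    if method.upper() in SAFE_METHODS:
        return True
    # Header names are case-insensitive, so normalize before looking up.
    normalized = {name.lower(): value for name, value in headers.items()}
    origin = normalized.get("origin")
    if origin is None:
        # Assumption for this sketch: no Origin header means no state change.
        return False
    return origin in trusted_origins


if __name__ == "__main__":
    trusted = {"https://example.org"}
    # A form posted from the site itself.
    print(is_request_allowed("POST", {"Origin": "https://example.org"}, trusted))
    # The same form posted from somebody else's page.
    print(is_request_allowed("POST", {"Origin": "https://evil.example.net"}, trusted))
```

The whole policy is one list of trusted origins, which is about the level of effort a quick fix should take.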

And of course, it'd make our lives a lot easier if we could provide more separation between security and design so that design choices wouldn't necessarily compromise your security.

If we had more separation between security and design of web pages, we could offload security to others. For example, the people in an organization who may care most about security are your systems administrators, because they're the ones who get woken at 4am if something goes wrong, and they're the ones who have to clean up the mess.

We may even want to consider offloading security to the users: they're the ones whose data is most at risk, and they're willing to install virus scanners and even NoScript to try to protect themselves: surely we could do better there.

And finally, there's always the option of hiring outside security experts. The costs currently are prohibitive for smaller sites, but if basic security were easier, maybe we could make this more reasonable.

One thing I've been working on is a visual system for defining security policy, so it can be integrated with design tools and so security can be articulated in a language designers already understand. I'd be happy to talk more about it if you're curious.

In conclusion, while we're doing some good work in web security, we're really limiting our impact if we don't reach out to the broader range of folk who create web pages. Making web security sound complex, time-consuming and hard at all levels may be great for our job security, but it isn't the best way to go about actually making the web safer for the world!

Edit: Although I was unaware of this when I wrote the paper whose title is used in this blog post, apparently "No Website Left Behind" is trademarked by Cenzic.

3 comments:

Inspiring Presentation.

I've never seen one like that before.

Congrats.

If you're not a programmer, you shouldn't be exposing your code to hostile users. If you want to customize your website beyond playing with the layout and static content, hire someone to do it. Computer programs are the most complicated "devices" created to date, and cut-and-paste doesn't change that.

Really separating design and code, to the point where the designer can't inject code deliberately (let alone accidentally), is about the only approach that I can see having a chance of really working. That's what Wikis and web-forums do, using a markup language that's deliberately secure and gets converted to a subset of HTML. Yes, it means you can't install the web equivalent of a turbocharger without hiring a programmer... but then, most people wouldn't try to install a turbocharger without hiring a mechanic: and that's in many ways a much simpler job.

"Shouldn't" and "aren't" are very different things!

The point of this presentation was to remind folk that whether they should or not, our current setups do allow non-technical page creators to impact security. Separation is a powerful tool, but it's quite weak if there's programmer error on the supposedly secure system side, so it's not really enough by itself.
